I’m confused about how Originality AI evaluates content that’s been run through humanizer tools. Some of my articles are being flagged as AI even after I edit them myself, and I’m not sure what I’m doing wrong or how this impacts rankings. Can anyone break down how these humanizer reviews work, what signals they look for, and what I should change in my writing process so my content passes as genuinely human?

Originality AI Humanizer review, from someone who spent too long testing this thing

I went into this one with some expectations. Originality is known for its AI detector, so I figured their humanizer would at least know how to dodge their own kind of system.

Didn’t happen.

I pushed a bunch of samples through the Originality AI Humanizer. My full write-up, with the detection results, is here:

https://cleverhumanizer.ai/community/t/originality-ai-humanizer-review-with-ai-detection-proof/27

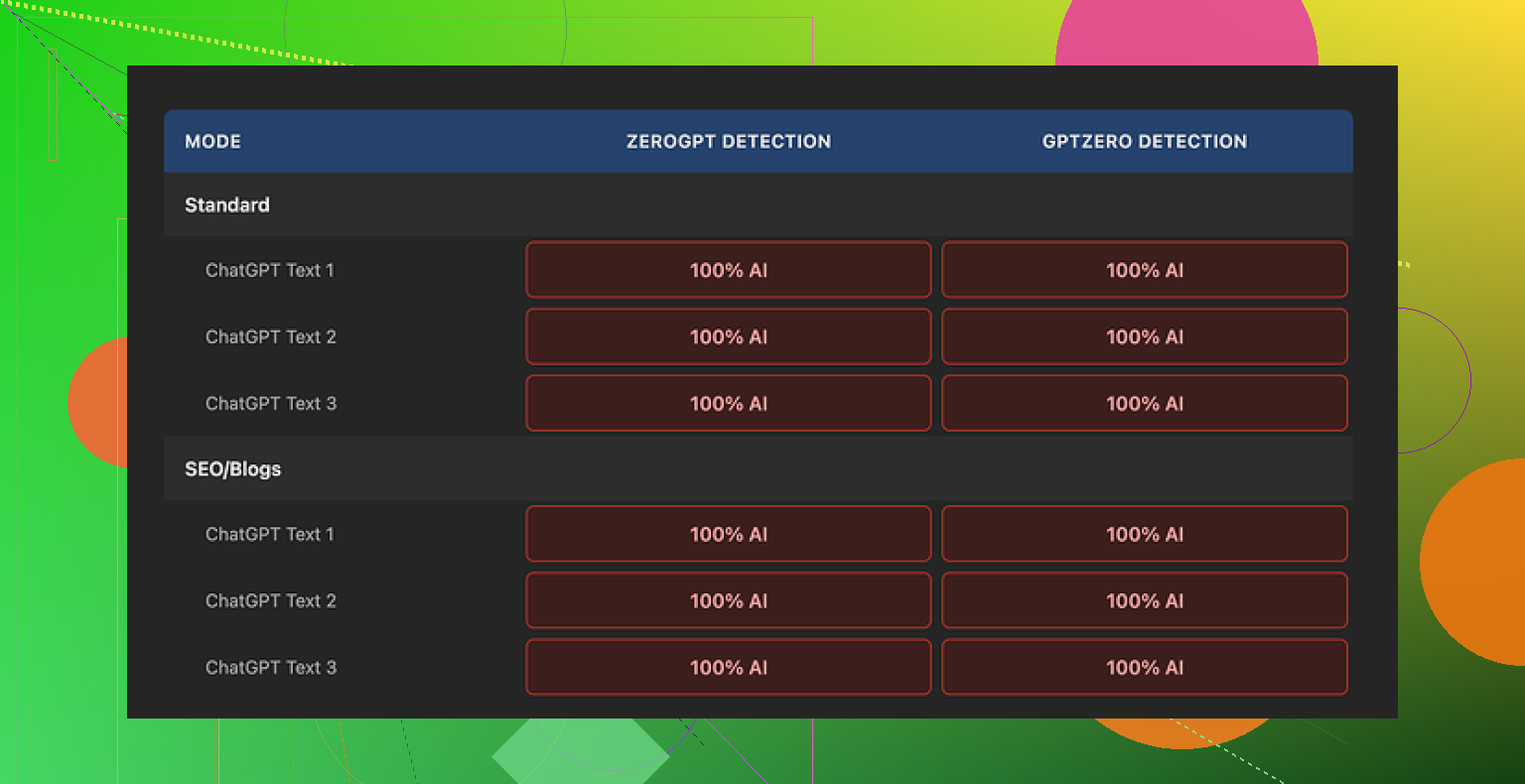

Then I ran every output through GPTZero and ZeroGPT.

Result: 100% AI every single time. No partial scores. No mixed output. Standard mode and SEO/Blogs mode both flagged as fully AI across all tests.

How much did it change the text

Short answer, almost not at all.

I dropped in typical ChatGPT style content and compared input versus output side by side. Here is what I kept seeing:

• Same sentence structure.

• Same favorite AI phrasing left untouched.

• The classic overused words still there.

• It even kept em dashes, which most detectors love as a pattern clue.

Sometimes it would tweak a word or two or stretch a sentence, but the “voice” stayed identical. If you showed me the before and after without labels, I could not tell which one was “humanized” without diffing them line by line.

So any attempt to rate the writing quality of the output turns into rating the original AI model, not the humanizer. The tool is barely doing anything that affects how detectors see the text.


What worked fine

To be fair, some parts of the product are OK if you treat it as a small text rewriter, not as a detector bypass:

• It is free to use and you do not need an account.

• There is a 300-word cap per run, but I got around it by using multiple incognito windows and chunking text. Annoying, but workable.

• The length slider does what it says. If you want slightly longer output, it will expand it. Still AI-ish, but longer.

• Their privacy policy reads like a lawyer wrote it, not a bot. They state that your content might be used for AI training, but you get a retroactive opt-out, which is rare for these tools.

So for someone who wants a quick rephrase or expansion and does not care about detection, it functions. It just does not do the job it is marketed for.

Why it fails as a “humanizer”

From what I saw:

• No strong changes in rhythm or syntax.

• No effort to strip out obvious AI tells.

• No deliberate variation in structure or tone.

• No visible pattern-breaking that would confuse detectors.

Detectors look for consistent patterns, and this thing keeps those patterns almost intact. That is why GPTZero and ZeroGPT both stomped it with 100% AI on every trial I ran.

It feels more like a thin tool put on top of their brand to pull search traffic, then push people over to their detection products.

So if your goal is:

“I need text that survives AI detection for school, freelance work, or publication.”

This will not help you. You will still get flagged.

What I ended up using instead

After going through a pile of these humanizers, the one that performed noticeably better for me was Clever AI Humanizer. Their write-up and test set are here:

In my own tests it scored higher on quality and detection bypass, and it is also free. I am not saying it is perfect, but it did not return 100% AI on every check like Originality’s tool did.

Quick takeaways if you are skimming

• Originality AI Humanizer leaves your text almost unchanged.

• GPTZero and ZeroGPT both flagged all my samples as 100% AI.

• Standard vs SEO/Blogs mode made no difference in detection.

• It is free, no login, but capped at 300 words per run.

• Has a length slider and a decent privacy policy with opt-out for training.

• As an actual humanizer for bypassing AI detection, it fails.

• Feels more like a funnel to their paid detector ecosystem than a serious solution.

If you only need light rewriting, it works about as well as asking ChatGPT to rephrase something. If you need detection-safe text, you should not rely on this one.

Originality’s detector does not look at “humanizer used or not”. It looks at patterns in the text. If those patterns still look like model output, it flags you, even if you spent time editing.

What I see from your description:

- Humanizer tools rarely fix the real issue

Most “humanizers” lightly rephrase. They keep:

• Sentence rhythm the same

• Word choice patterns

• Predictable paragraph structure

• Overuse of certain connectors and qualifiers

So the detector still sees high predictability. That is what @mikeappsreviewer found when he tested the Originality AI Humanizer. It changed almost nothing in a way that matters for detectors.

- Manual edits often do not go far enough

A few word swaps or some extra adjectives will not move the needle. To shift a detection score, you need changes on several levels:

• Structure

– Break and merge sentences.

– Change paragraph order.

– Shift where the “point” of each paragraph sits. Start with a concrete detail, not a summary.

• Information pattern

– Add specific personal references or context that a generic model would not guess.

– Include small, checkable details from your own experience or workflow.

– Remove generic filler like “on the other hand”, “it is important to note”, “in today’s world”, etc.

• Style

– Mix short and long sentences instead of writing a steady stream of mid-length ones.

– Change default transitions. Use things like “so”, “then”, “next up”, “here is the catch”, instead of textbook connectors.

– Introduce your own verbal tics that you naturally use when you talk.

If you edit while staring at the original AI text, you will tend to preserve its skeleton. That skeleton is what the detector hammers.
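If you want a rough way to check whether you kept the skeleton, a small Python script can compare your draft with your edit. This is a sketch under my own assumptions: the function names are made up for illustration, the sentence splitter is naive, and it only tells you how similar the two versions are to each other. It does not reproduce anything Originality actually computes.

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    # Naive splitter: break after ., ! or ? when followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def skeleton_report(original: str, edited: str) -> dict:
    """Compare a draft with its edit at word level and sentence-length level."""
    orig_words = original.lower().split()
    edit_words = edited.lower().split()
    # 1.0 means the word sequence is identical; lower means more rewriting.
    word_similarity = difflib.SequenceMatcher(
        None, orig_words, edit_words, autojunk=False
    ).ratio()

    orig_sents = split_sentences(original)
    edit_sents = split_sentences(edited)
    # Same sentence lengths in the same order suggests the sentence
    # skeleton survived even if individual words changed.
    orig_lens = [len(s.split()) for s in orig_sents]
    edit_lens = [len(s.split()) for s in edit_sents]
    length_similarity = difflib.SequenceMatcher(
        None, orig_lens, edit_lens, autojunk=False
    ).ratio()

    return {
        "word_similarity": round(word_similarity, 2),
        "sentence_counts": (len(orig_sents), len(edit_sents)),
        "length_pattern_similarity": round(length_similarity, 2),
    }


if __name__ == "__main__":
    draft = "It is important to note that audits take time. Clients want fast results."
    edit = "Audits are slow. Clients hate that. So I send a one-page summary first."
    print(skeleton_report(draft, edit))
```

If both similarity numbers stay high, your edit was probably mostly word swaps. What counts as "high" is your call; I would not read much into the exact values.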

- Originality AI is harsher on certain content types

Long, well-structured, very “clean” informational posts about common topics get flagged more often, even when a human wrote them. For example:

• Topic: generic how-to guides

• Perfect spelling, no small quirks

• Very steady sentence length

• Balanced paragraph sizes

This pattern screams “model” to current detectors. So if your natural style is “clean blog content”, you will get caught often, even if you never touched a humanizer.

- What you can do differently in practice

Here is a simple workflow that tends to lower scores across tools without turning your life into a full-time rewrite job:

Step 1: Start from your own outline

Even if you used AI to get ideas, rewrite the outline in your words. Move points around. Combine or delete sections. Do this before you touch the body text.

Step 2: Rewrite each paragraph without looking at it

Read one paragraph once. Hide it. Then write the same point from memory, in your normal way of speaking. You will automatically change structure and diction.

Step 3: Insert “you-only” content

In each section, add at least one thing that came from your life, data, or process. For example:

• “In my last 20 client audits, the only pages that passed Originality were…”

• “When I tested this on a 3k-word SaaS review, the score stayed at 80% AI until I did X.”

Models tend to avoid narrow, grounded details like that, or they produce them in a different style.

Step 4: Remove AI-sounding padding

Search your text for:

• “on the other hand”

• “it is important to note”

• “in today’s digital age”

• “overall” at the start of a summary sentence

Replace them with more direct phrasing or cut the sentence if it adds nothing.
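A quick way to do that search is a short Python pass over the draft. The phrase list below is just the items above (with “overall” only flagged at the start of a sentence); `find_padding` is a hypothetical helper name, and matching is plain case-insensitive substring search, so expect the odd false positive.

```python
import re

# The phrases from the search list above; add your own pet phrases here.
PADDING_PHRASES = [
    "on the other hand",
    "it is important to note",
    "in today's digital age",
]


def find_padding(text: str) -> list[tuple[int, str]]:
    """Return (line number, phrase) pairs, at most one hit per phrase per line."""
    hits = []
    # Normalize curly apostrophes so "today’s" matches "today's".
    normalized = text.replace("\u2019", "'")
    for lineno, line in enumerate(normalized.splitlines(), start=1):
        lower = line.lower()
        for phrase in PADDING_PHRASES:
            if phrase in lower:
                hits.append((lineno, phrase))
        # "Overall" is only flagged when it opens a sentence.
        if re.search(r"(?:^\s*|[.!?]\s+)overall\b", lower):
            hits.append((lineno, "overall (sentence start)"))
    return hits


if __name__ == "__main__":
    sample = "Overall, the tool works.\nOn the other hand, it is slow."
    for lineno, phrase in find_padding(sample):
        print(f"line {lineno}: {phrase}")
```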

Step 5: Change tempo and format

Originality seems sensitive to monotony. To break it:

• Use bullet lists only when needed, not every section.

• Throw in a one-word sentence if it fits.

• Ask a short question and answer it right away.

• Mix 5-word and 25-word sentences.
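To see whether your tempo is actually varied, you can measure sentence lengths. A sketch, assuming a naive split on `.`, `!` and `?`: a low standard deviation means most sentences are about the same length, which is the monotony described above. This is my own rough proxy, not how Originality scores text.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    # Naive split after ., ! or ? followed by whitespace; fine for a rough check.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def tempo_report(text: str) -> dict:
    """Summarize how much sentence length varies across a passage."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean": 0.0, "stdev": 0.0, "shortest": 0, "longest": 0}
    # statistics.stdev needs at least two values.
    spread = statistics.stdev(lengths) if len(lengths) > 1 else 0.0
    return {
        "sentences": len(lengths),
        "mean": round(statistics.mean(lengths), 1),
        "stdev": round(spread, 1),
        "shortest": min(lengths),
        "longest": max(lengths),
    }


if __name__ == "__main__":
    flat = "This sentence has six words total. This one also has six words."
    varied = "Short. This second sentence runs on for quite a bit longer than the first."
    print(tempo_report(flat))
    print(tempo_report(varied))
```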

- About using other tools

If you still want help from tools, treat them as rough assistants, not magic filters.

Clever AI Humanizer, which @mikeappsreviewer mentioned, tends to alter structure and rhythm more than Originality’s own humanizer. That helps a bit with detection, especially when you then do your own pass on top. Do not paste in 3k words and trust the output. Run shorter chunks, then rewrite again in your own voice.
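For the chunking part, something like this splits a draft at sentence boundaries so no chunk goes over a word limit. The 300 default just mirrors the per-run cap mentioned earlier in the thread; `chunk_by_words` is a made-up name, and a single sentence longer than the limit still ends up as its own oversized chunk.

```python
import re


def chunk_by_words(text: str, max_words: int = 300) -> list[str]:
    """Split text into chunks of at most max_words words, breaking only at sentence ends.

    A single sentence longer than max_words still becomes its own (oversized) chunk.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        # Close the current chunk if this sentence would push it past the limit.
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    draft = "First point here. Second point is a bit longer. Third one."
    for i, chunk in enumerate(chunk_by_words(draft, max_words=6), start=1):
        print(i, chunk)
```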

- What you might be “doing wrong”

Common mistakes I see when people try to beat Originality:

• Editing only at word level, not at structure level.

• Relying on one humanizer pass, no manual cleanup.

• Keeping generic intros and conclusions that read like a template.

• Using the same tone for every article, such as “formal blog voice”.

• Writing only about SEO-safe topics that models have seen a million times.

If you want to test whether your edits work, do this:

• Take a 400-word section.

• Run Originality, note the score.

• Do the “read once, hide, rewrite from memory” method.

• Add one or two personal or data points.

• Run it again and compare.

You will see how sensitive the tool is to structure and specificity. That helps you train your own editing instincts instead of depending fully on any humanizer.
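If you run that test on several sections, it helps to log the numbers instead of eyeballing them. A minimal sketch, assuming you type in the scores by hand from whatever the detector shows you (there is no API call here, and the example values are placeholders, not real results):

```python
from dataclasses import dataclass


@dataclass
class Trial:
    section: str
    score_before: float  # "% AI" you read off the detector before rewriting
    score_after: float   # "% AI" after the rewrite-from-memory pass

    @property
    def change(self) -> float:
        return self.score_after - self.score_before


def summarize(trials: list[Trial]) -> str:
    """One line per section: before -> after, plus the change in points."""
    return "\n".join(
        f"{t.section}: {t.score_before:.0f}% -> {t.score_after:.0f}% ({t.change:+.0f} points)"
        for t in trials
    )


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not real test results.
    trials = [Trial("intro", 90, 40), Trial("how-to section", 75, 70)]
    print(summarize(trials))
```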

Yeah, this is exactly where a lot of people are getting tripped up with Originality.

The big thing most folks miss: Originality is not “checking for humanizer usage,” it is just looking at textual patterns. If your text still behaves like model output, it does not matter how many tools you ran it through or how many “humanizer” badges you slapped on it.

Quick points based on what you wrote and what @mikeappsreviewer / @viaggiatoresolare already covered:

- Humanizers are usually surface-level

They both already showed that Originality’s own humanizer barely changes anything important. I’ll add something slightly different: a lot of those tools are constrained on purpose. If they go too wild, people complain the output is “off topic” or “too weird.” So they end up:

- Preserving the same paragraph logic

- Preserving the same overall flow

- Only swapping synonyms or shuffling clauses

From a detector’s perspective, that is still dead obvious. Humanizers want you to feel like it changed a lot. Detectors do not care how you feel.

- Manual editing that does not move the “probability needle”

You said you edit the text yourself and still get flagged. Common pattern I see:

- You keep the AI-generated intro and outro intact

- You follow the same sequence of subheadings the model created

- You just “massage” sentences instead of restructuring entire sections

That is closer to “post-processing an AI draft” than “writing your own article.” Detectors are tuned exactly for that hybrid style right now.

I slightly disagree with the idea that you always have to rewrite paragraphs completely blind. That works, but it is overkill for some people and not sustainable at scale. Instead, try this:

- Kill the generic intro and conclusion every single time

If the first paragraph starts with stuff like “In today’s digital landscape” or “With the rise of…”, just delete it and write your own hook from scratch. Same for the final “In conclusion” wrap-up.

- Replace AI section ordering with your own logic

Even if you keep the same points, reorder them based on how you would explain the topic to a friend or a client. Detectors pick up on that textbooky, perfectly linear ordering a lot more than people realize.

- Inject “decision points”

Real humans contradict themselves, hedge, or change direction mid-article. Add small moments like “Honestly, I tried X first and it did not move the needle at all, so I switched to Y.” Models tend to stay too smooth and consistent.

- Topical bias is killing some legit writers

Where I 100% agree with what was said: certain topics and tones are just cursed. If you write:

- Listicles on basic SaaS tools

- Super polished “ultimate guides” on generic topics

- Long, evenly formatted explainers without any edge

You are in the worst possible overlap with what LLMs spit out all day. Even if you are fully human, Originality will tilt heavily toward “AI-like” because the shape of your content lives in that space.

If this is your niche, your best bet is to:

- Lean into opinion and stance

- Use examples from your own clients, projects, or data

- Be willing to sound slightly less “corporate perfect” and more like an actual person with preferences

- Humanizers as assistants, not shields

Where I differ a bit from some of the takes: I do think tools can still be useful, but only if you treat them as a rough helper, not a cloaking device.

For example, Clever AI Humanizer is decent as a first pass to knock the obvious “ChatGPT voice” off a block of text. But what actually matters is what you do after:

- Run a chunk through Clever AI Humanizer

- Immediately read it out loud and mark anything that still sounds robotic or over tidy

- Rewrite or chop those parts aggressively

If you stop at the tool output and paste it into Originality, you are basically begging to get a “likely AI” label. Same problem as with the Originality humanizer, just with a slightly better baseline.

- Why your stuff is still being flagged

Putting it together, most likely:

- You are keeping the same structure the AI created

- Your edits are stylistic, not structural

- You might be writing in a very “AI-favored” topic and tone

- You are trusting that “I ran it through a humanizer, so I’m safe,” which is exactly what the detectors are evolving against

If your goal is to actually reduce the AI score instead of playing whack-a-mole:

- Own the skeleton of the article yourself

- Use tools like Clever AI Humanizer as a helper, not a finish line

- Brutally delete generic sections instead of trying to patch them

- Add concrete, lived details that an LLM would not default to

It sucks, but the reality right now is: if the article reads like it could have been written by an AI trained on polished blog content, Originality will keep flagging it, no matter how many humanizers you run it through.